AI Singularity by 2026? Here's What the World's Top Tech Leaders Are Actually Saying

Mar 15, 2026

Three weeks ago, Elon Musk dropped two posts on X that stopped the internet cold. First he wrote, "We have entered the singularity." Then, just hours later, he doubled down: "2026 is the year of the singularity." And here's the thing — he's not the only one saying it anymore.

When the CEOs of Anthropic, Google DeepMind, and OpenAI all start pointing at the same timeline, that's not hype. That's a signal worth understanding. So let's break it down together, plain and simple.

What Is the Singularity — And Why Should You Care?

Forget the Terminator movies. The singularity isn't science fiction, and it isn't just Silicon Valley buzzword soup either. It's a concept with a specific definition that serious thinkers have studied for decades.

Where the Idea Comes From

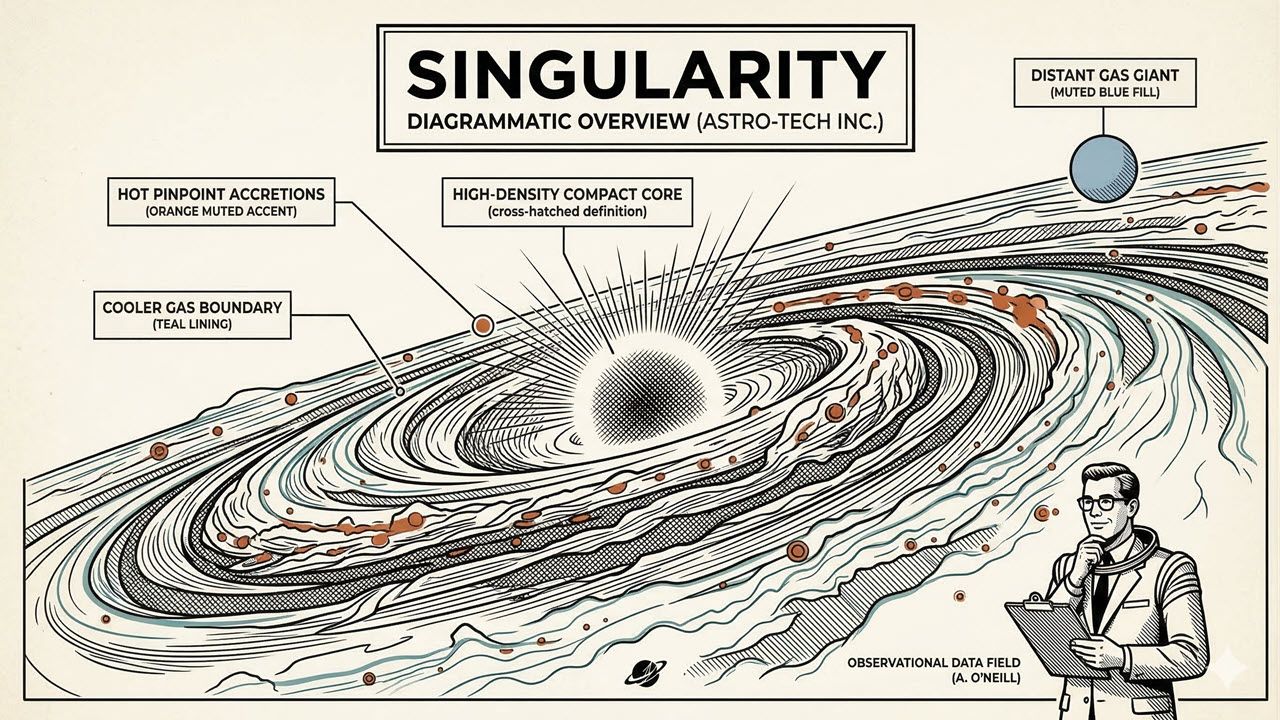

In 1993, mathematician and sci-fi author Vernor Vinge wrote a paper called "The Coming Technological Singularity." His core idea was bold but simple. Once we create superhuman intelligence, human dominance over our own future effectively ends. He compared it to crossing the event horizon of a black hole — once you're past it, you can't see what's on the other side.

Then in 2005, futurist Ray Kurzweil brought the idea to mainstream audiences. His version was more technical. The singularity, he explained, is the moment AI can improve itself. Then that improved version improves itself again. Progress snowballs so fast that human life gets transformed beyond recognition. Kurzweil predicted this would happen around 2045. Elon Musk just moved that date up by nearly 20 years.

The Part Most People Miss

Here's what's really important to understand. The singularity isn't just about how smart AI gets. It's about the speed of improvement. Think of it like a car. It's not just about how fast it goes — it's about how quickly it's accelerating. When AI improves faster than humans can track or control it, we've crossed the line.

- It's not about one breakthrough moment

- It's about compounding, self-reinforcing progress

- The speed of change is the signal, not just the capability level
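
To make the "speed, not level" point concrete, here's a toy sketch in Python. Everything in it is made up for illustration: the starting capability of 1.0, the fixed gain of 0.5 per step, and the 50% self-improvement rate are assumptions, not measurements of any real AI system. It simply compares steady progress with progress that feeds on itself.

```python
def steady_progress(start: float, gain_per_step: float, steps: int) -> list[float]:
    """Capability grows by the same fixed amount every step (linear)."""
    return [start + gain_per_step * i for i in range(steps + 1)]


def compounding_progress(start: float, rate: float, steps: int) -> list[float]:
    """Each step's gain is proportional to current capability (self-reinforcing)."""
    return [start * (1 + rate) ** i for i in range(steps + 1)]


if __name__ == "__main__":
    steps = 10
    # Hypothetical numbers, chosen only to show the shape of the two curves.
    steady = steady_progress(1.0, 0.5, steps)
    compounding = compounding_progress(1.0, 0.5, steps)
    for i in range(steps + 1):
        print(f"step {i:2d}  steady={steady[i]:6.2f}  compounding={compounding[i]:7.2f}")
```

After one step the two curves are tied at 1.5. By step 10, the steady one has reached 6.0 while the compounding one is past 57. That widening gap is what the singularity argument is really about.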

Why Musk Is Making This Claim Right Now

Timing matters here. Musk made these declarations right after his AI company, xAI, closed a massive $20 billion funding round. That's not a coincidence. Money on that scale tells a story of its own.

The Colossus Supercomputer Is Unlike Anything Before It

With that funding, xAI is building something called the Colossus supercomputer in Memphis. It already houses over one million high-powered graphics cards. The expansion plan targets 1.5 million processors. To put that in perspective, running this machine requires enough power to light up 1.5 million homes.

Investors like NVIDIA, Cisco, Fidelity, and sovereign wealth funds from Qatar and Abu Dhabi are all backing this. xAI's valuation now sits around $230 billion. That puts it neck and neck with OpenAI and Anthropic.

What Makes xAI Different From Its Rivals

Here's where it gets really interesting. xAI has access to real-world data that competitors simply don't have.

- Millions of Teslas on the road collect continuous driving data

- X, Musk's social platform, gives xAI a live feed of real-time posts from around the world

- Their upcoming Grok 5 model will have 6 trillion parameters

- GPT-4, which wowed everyone at launch, reportedly had about 1.8 trillion (OpenAI never confirmed the figure)

That's not a small upgrade. That's a completely different league. And the real-world data advantage means their AI learns from life, not just textbooks.

The Benchmark Scores That Are Hard to Ignore

You might think this is all talk. So let's look at the actual test results. These numbers are striking.

AI Is Now Beating Doctoral-Level Experts

There's a test called GPQA Diamond. It contains 198 questions written at PhD level in biology, chemistry, and physics. These are the kinds of questions designed to separate true experts from everyone else. Here's how today's AI models performed:

- Claude Opus 4.5 scored around 87%

- GPT-5.2 Pro scored 93%

- Gemini 3 Deep Thinking scored 93.8%

Let that sink in. These are not general knowledge quiz scores. These are doctoral-level results.

The Coding and Job Performance Numbers Are Just as Wild

In real-world software engineering tests, the best AI models were stuck around a 50% success rate in late 2024. Today, Claude Opus 4.5 hits 80.9%. That's a 30-point jump in roughly one year.

OpenAI ran a test called GDPval across 44 different occupations. It compared AI output against work from experienced human professionals in each field. The result? AI matched or beat the human experts in 71% of tasks. We're talking lawyers, accountants, analysts, and marketers: careers that take years of education to enter.

What the World's Top AI Leaders Said at Davos

This is where things shifted from interesting to genuinely important. At the World Economic Forum in Davos, three of the most powerful figures in AI gave statements that surprised even seasoned observers.

Dario Amodei's Alarming Predictions

Dario Amodei, CEO of Anthropic, didn't mince words. He shared three major predictions with the assembled global leaders:

- AI will replace almost all software developer work within 6 to 12 months

- AI models will reach Nobel Prize level in multiple fields by 2026 or 2027

- Up to 50% of junior white-collar jobs could vanish within one to five years

He also shared something from inside his own company. At Anthropic, engineers barely write code by hand anymore. AI handles it, and humans review the output. This isn't a prediction about the future. It's already happening inside one of the world's leading AI labs.

What Google and OpenAI's Leaders Are Saying

Demis Hassabis, CEO of Google DeepMind, is more cautious by nature. But even he put the odds of reaching AGI (artificial general intelligence) by 2030 at 50%. And Sam Altman at OpenAI has stated publicly that his team is now confident it knows how to build AGI as it has traditionally been understood. OpenAI, he said, is turning its attention to what comes after: superintelligence.

But Wait — There Are Serious Doubters, Too

Good journalism means giving you both sides. And the skeptics here are not small voices. They deserve your attention.

Why Some Experts Think the Hype Is Overblown

Yann LeCun is a deep learning pioneer and a winner of the Turing Award, which is basically the Nobel Prize of computer science. He believes current AI models will never reach human-level intelligence. In his view, we need a completely different approach, not just bigger versions of what we have today. His skepticism reportedly made him unpopular enough at Meta that he partly stepped back from his leadership role there.

Even Hassabis himself admits there are still one or two major breakthroughs missing. Specifically, AI still struggles to learn quickly from just a few examples and reason effectively over long timeframes. And Musk has a track record of optimistic timelines that slip. Tesla's full self-driving feature was promised years before it became partially available in limited US regions.

- The "low-hanging fruit" argument suggests easy AI wins are already behind us

- Like drug development, each new leap may cost more and take longer

- AI could hit a plateau just like many previous tech waves did

Four Real Signals to Watch Right Now

You don't need to trust anyone's predictions blindly. Instead, watch these four concrete signals. They'll tell you what's actually happening far more reliably than any CEO's tweet.

The Indicators That Actually Matter

- Economic growth rates: A true singularity implies annual economic growth exceeding 20%. Today's fastest economies grow at 5–7%. Watch AI productivity data closely.

- AI self-improvement loops: Right now, AI helps design chips and optimize its own architecture. But it's not doing this fully autonomously yet. Watch for closed, self-directed improvement cycles.

- Benchmark saturation: When AI scores near 100% on every test — including creative and scientific reasoning — that's a major signal. We're almost there in math and coding.

- Brain-computer interfaces: Neuralink is already implanting devices in humans. When AI merges with the human body and controls physical robots like Tesla's Optimus, we'll be in genuinely new territory.
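
To get a feel for how big the gap is between 20% growth and today's 5 to 7%, it helps to ask how long an economy takes to double in size. The short Python sketch below does that arithmetic. It assumes a constant growth rate compounded once a year, which is a simplification, and the function name `doubling_time_years` is just an illustrative label.

```python
import math


def doubling_time_years(annual_growth: float) -> float:
    """Years for an economy to double at a constant annual growth rate (0.20 = 20%)."""
    if annual_growth <= 0:
        raise ValueError("growth rate must be positive")
    # Solve (1 + g) ** t == 2 for t.
    return math.log(2) / math.log(1 + annual_growth)


if __name__ == "__main__":
    for rate in (0.05, 0.07, 0.20):
        years = doubling_time_years(rate)
        print(f"{rate:.0%} growth -> doubles in about {years:.1f} years")
```

At 5% growth an economy doubles in about 14.2 years, and at 7% in about 10.2. At 20% it doubles in about 3.8 years. That's the kind of shift this signal is meant to catch.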

What This Actually Means for Your Life and Your Career

Let's get practical. Because all of this matters most in terms of how it affects your daily reality.

The Jobs at Risk Are Not What You'd Expect

McKinsey estimates that up to 30% of the global workforce could face automation by 2030. We're not just talking about factory floors. We're talking about radiologists, lawyers, accountants, and software engineers — roles that required expensive degrees and years of experience to enter.

The optimistic view says we're heading toward abundance. If AI handles the grind, humans get more time for creativity, family, and purpose. AI might also crack our hardest problems — cancer, climate change, food security. The pessimistic view says that whoever owns the AI will accumulate staggering power while everyone else falls behind. Honestly, both things can be true at once. What tips the balance is the choices we make as a society, starting now.

Three Things You Can Do Starting Today

- Use the tools: Try ChatGPT, Claude, and Grok yourself. Don't just read about them. Hands-on experience changes your perspective fast.

- Double down on what's human: Identify your strengths in empathy, creativity, leadership, and relationship-building. AI is not close to replacing those.

- Stay flexible: Your job may look very different in five years. That's not a reason to panic — it's a reason to keep learning and adapting.

In every major technological shift in history — the internet, mobile phones, personal computers — the people who engaged early came out ahead. The ones who waited until it was obvious to everyone often scrambled to catch up. This wave is moving faster than any before it. Waiting to see is no longer a low-risk strategy.

So here's my question for you: are you actively preparing for this shift, or are you hoping it slows down enough to deal with later? Drop your thoughts in the comments — I genuinely want to know where you stand.